Research

Overview

Most modern cosmological simulations use an approximate theory of gravity: Newtonian gravity, which describes gravitational interactions as an invisible force pulling anything with mass towards anything else with mass. This works well in most situations we're used to on Earth, and in most situations in general. It starts to break down when something is really massive (e.g. a black hole), when something small sits close to something much more massive (e.g. Mercury orbiting the Sun, where the perihelion of the orbit precesses), or when we consider really large distances.

In cosmology our whole game is really large distances.

Einstein's theory of general relativity is a much better description of gravity in these problematic situations.

Newtonian gravity (and some extensions to it) is predominantly used in cosmology because it's much simpler. Current state-of-the-art cosmological simulations use a combination of a homogeneous, isotropic spacetime in general relativity and Newtonian dynamics.
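As a rough sketch of what this combination looks like in equations (these are standard textbook forms, not taken from any particular simulation code): the background is a homogeneous, isotropic spacetime whose expansion is set by a single scale factor $a(t)$, and matter moves in a Newtonian potential $\phi$ sourced by the density contrast $\delta$.

```latex
% Homogeneous, isotropic (FLRW) background with scale factor a(t) and curvature constant k
ds^2 = -c^2\,dt^2 + a^2(t)\left[\frac{dr^2}{1 - k r^2} + r^2\,d\Omega^2\right]

% Newtonian gravity on top of that background (comoving coordinates)
% \bar{\rho}: mean matter density, \delta = (\rho - \bar{\rho})/\bar{\rho}
\nabla^2 \phi = 4\pi G\, a^2\, \bar{\rho}\, \delta
```

In this setup $a(t)$ is fixed by the average density alone, so the clumping of matter described by $\delta$ never changes how the background expands.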

Comparing what comes out of these simulations to observations means we can use them to test and improve our current best-fit cosmological model, the Lambda Cold Dark Matter model.

The instruments we use to observe the Universe are constantly being improved, so our data are getting more and more precise. As our error bars get smaller, we're starting to notice some discrepancies between what we see and what we expected to see, based on our cosmological model. One suggestion (among many) is that this could be because we're not using general relativity in full.

Newtonian dynamics describes the nonlinear gravitational collapse of matter extremely well. But pairing this with a homogeneous, isotropic spacetime means that this spacetime is unaffected by the matter on top of it. Effects such as curvature and inhomogeneous expansion are not properly captured in these simulations. These will have a direct effect on our observations, since they both affect the paths of light rays as they travel towards us. But how large is this effect? Will it become important as the precision of our observations improves? Could it already be having an effect on what we observe?

Numerical relativity is a way to solve Einstein's equations in full on the computer, so we don't need to make any simplifying assumptions about the spacetime, or the interaction between the matter and that spacetime. I do large-scale cosmological simulations of nonlinear structure formation using numerical relativity. Simulations like this are extremely new, and were only done for the first time a few years ago.

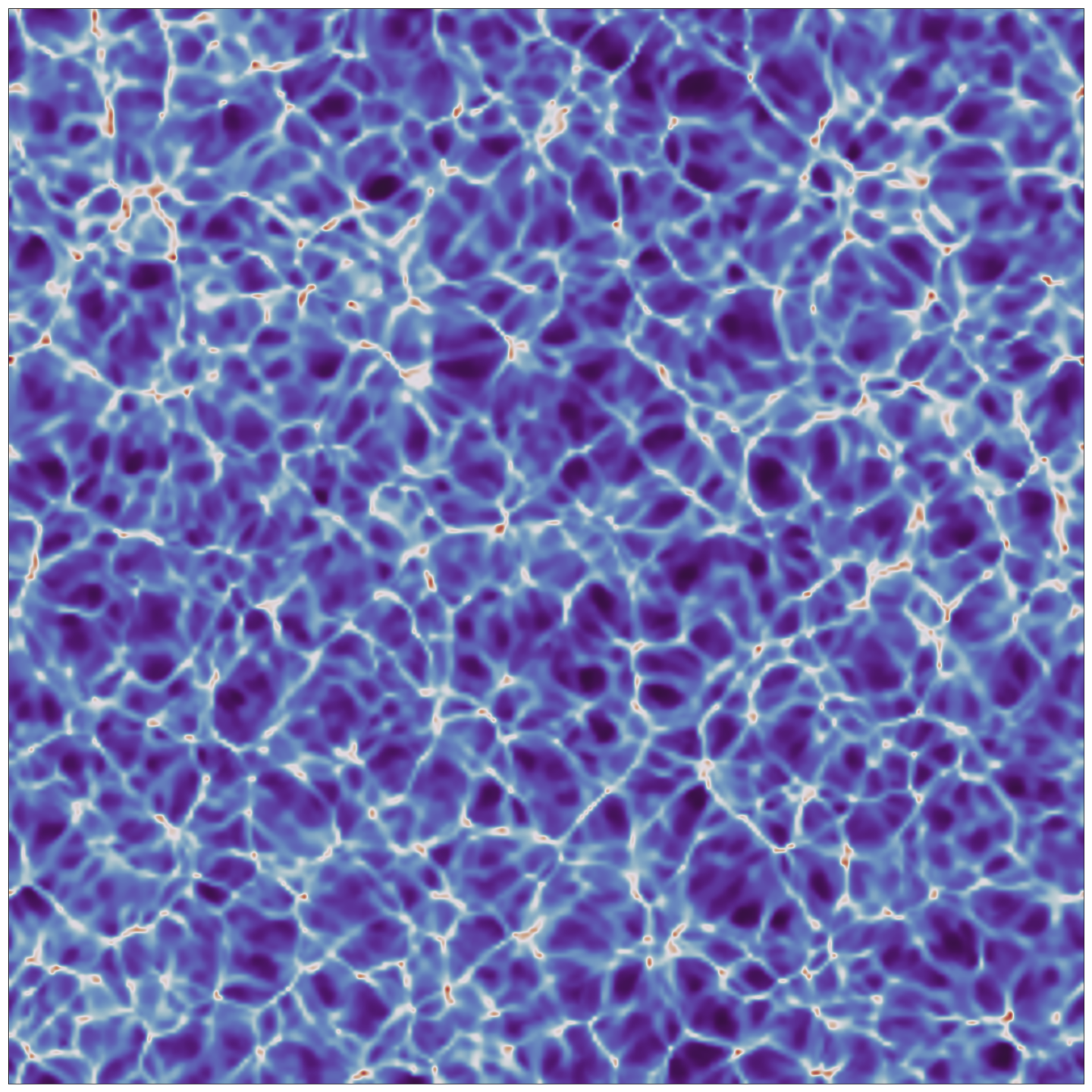

The image shows a snapshot from the end of one of my simulations using numerical relativity. Each side is 1 gigaparsec long, or about 30 billion trillion kilometers. The colours represent how dense the Universe is at different points. Red regions are the most dense and can be thought of as huge clusters of galaxies, white regions are medium density and can be thought of as thin filaments of galaxies, and dark blue regions are the least dense and can be thought of as huge regions that are almost completely empty, known as voids.

This simulation shows a large-scale cosmic web similar to the one seen in Newtonian simulations, but with a difference: the spacetime in this simulation is curved according to the matter on top of it. In getting here, matter and spacetime have been in constant communication.
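To get a feel for the box size quoted for this snapshot, here is a small sketch of the unit conversion. The parsec-to-kilometer factor is an approximate value, and the function name is just illustrative.

```python
# Approximate number of kilometers in one parsec (assumed, rounded value).
KM_PER_PARSEC = 3.0857e13
PARSEC_PER_GIGAPARSEC = 1e9


def gigaparsec_to_km(length_gpc):
    """Convert a length in gigaparsecs to kilometers."""
    return length_gpc * PARSEC_PER_GIGAPARSEC * KM_PER_PARSEC


# Side length of the simulation box: 1 gigaparsec.
box_side_km = gigaparsec_to_km(1.0)

# One "billion trillion" is 1e9 * 1e12 = 1e21.
box_side_billion_trillion_km = box_side_km / 1e21

print(f"{box_side_km:.2e} km")
print(f"about {box_side_billion_trillion_km:.0f} billion trillion km")
```

This gives roughly 3.1 x 10^22 km, which matches the "about 30 billion trillion kilometers" figure.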

Simulations without simplifying assumptions will allow us to fully quantify any and all of the general-relativistic effects we expect to see in upcoming precision cosmological data, and maybe in existing data too.